GitHub

After a recent blog post where I demonstrated a really simple method to generate high-resolution abstract images with neural networks and latent vectors, many people wondered whether they could use this method without needing to set up TensorFlow. Although some JavaScript code was available previously, it wasn’t general enough to use as a tool for a digital artist.

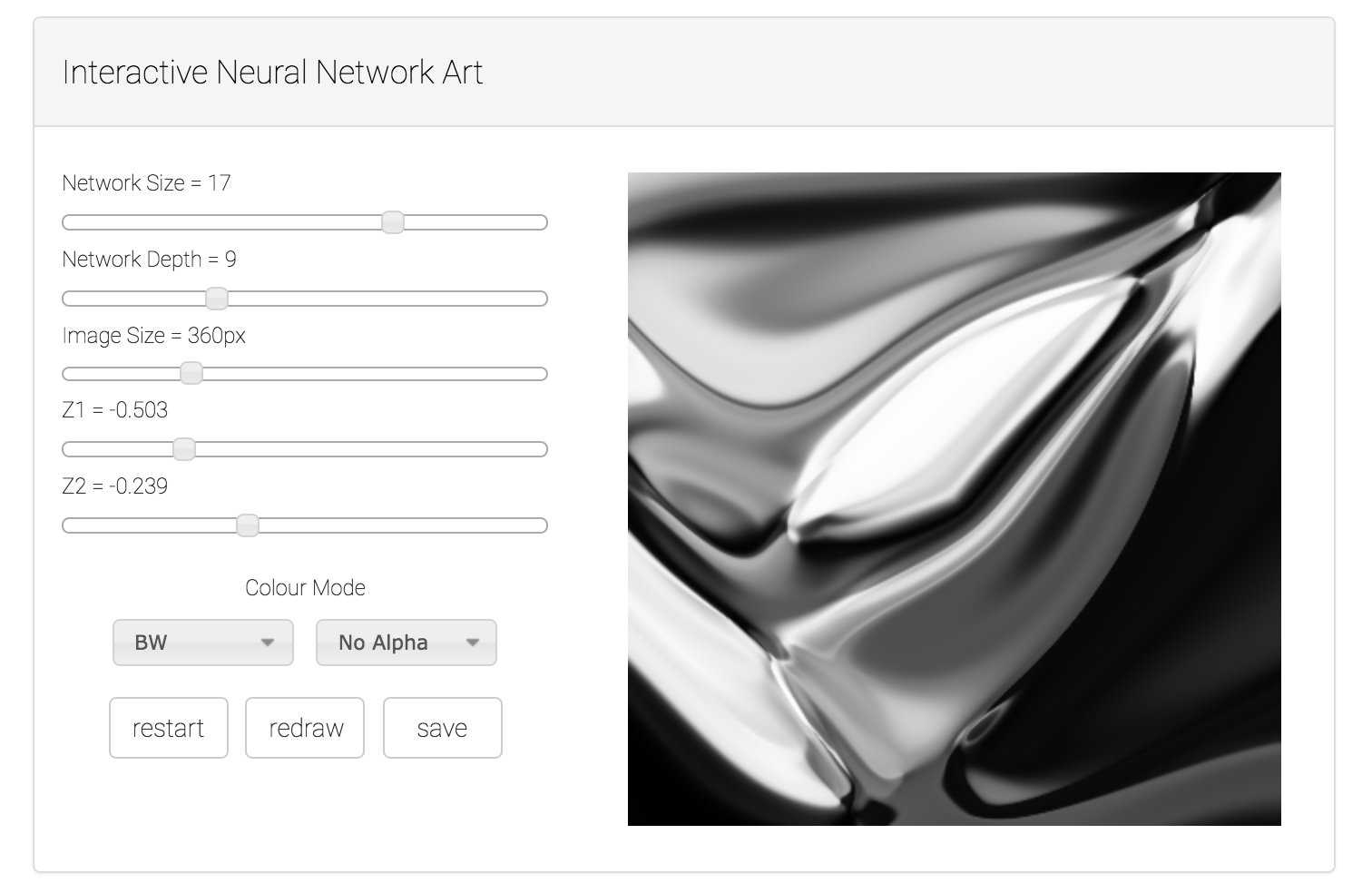

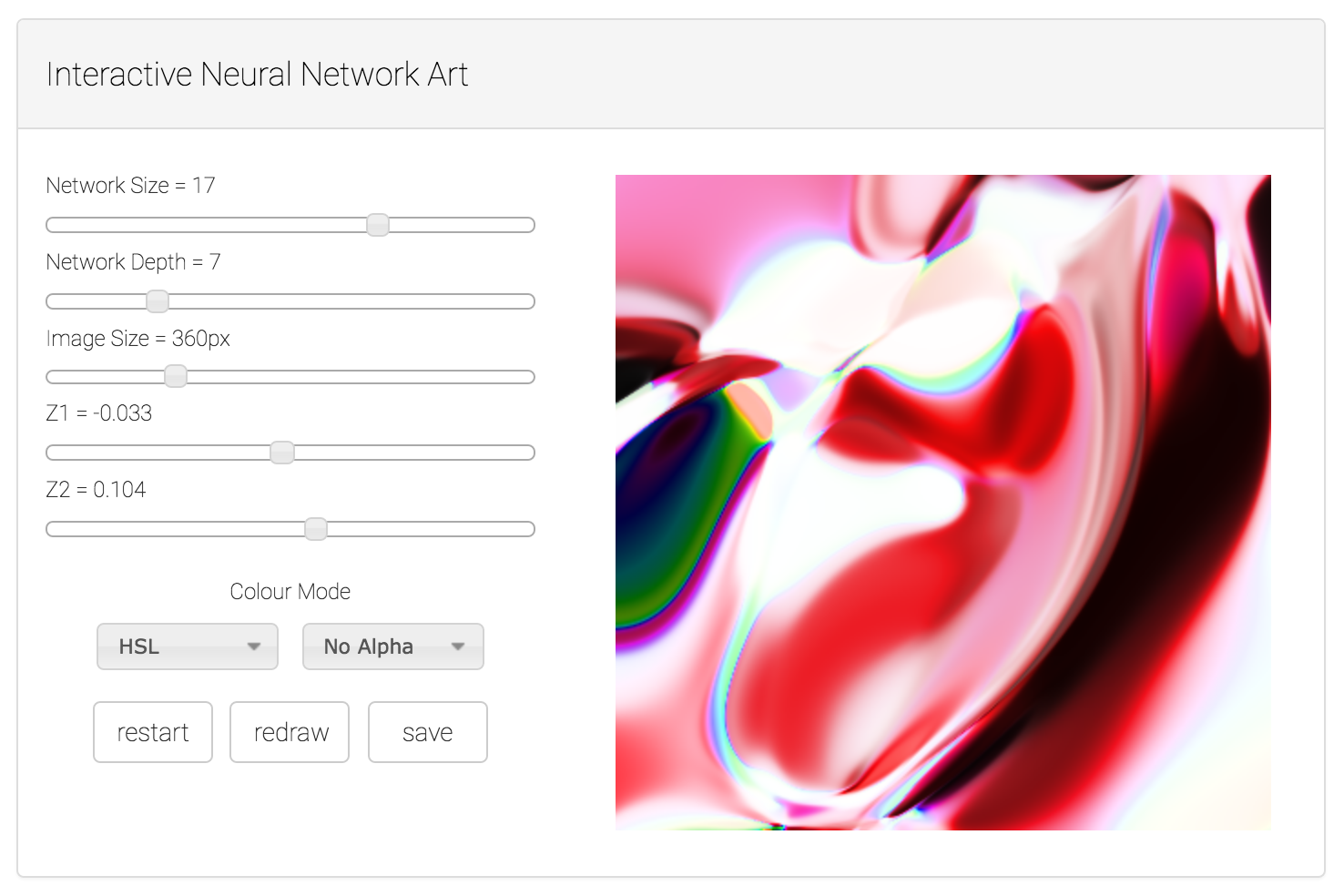

So I took the JavaScript code I had written previously and spent an hour or two fine-tuning it into a simple web app. Karpathy’s recurrent.js library makes it really easy to implement highly customised neural networks in JS, and adopts a computational-graph approach similar to modern libraries. Using these tools and jQuery, I incorporated latent vectors into the previous JS model, along with options for different colour models and alpha-transparency modes. In addition, the user can specify the size and depth of the generator neural network.


The generator network we have implemented takes in a latent vector Z of only 2 elements for this demo. The network is just a stack of fully-connected tanh layers, like before. The depth and size of the network, as well as the image resolution of the output, can all be customised in the web app.
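As a rough illustration of what such a generator might look like, here is a minimal sketch in plain JavaScript. It is not the web app's actual recurrent.js code. The function names (`randn`, `makeLayer`, `makeGenerator`, `forward`), the choice of inputs (pixel coordinates x and y followed by the two latent values), the absence of bias terms, and the standard-normal weight initialisation are all assumptions made for the example.

```javascript
// Sketch only: a stack of fully-connected tanh layers with a sigmoid output.
// All names and initialisation choices here are hypothetical.

// Draw a standard-normal sample using the Box-Muller transform.
function randn() {
  const u = 1 - Math.random(); // in (0, 1], avoids log(0)
  const v = Math.random();
  return Math.sqrt(-2 * Math.log(u)) * Math.cos(2 * Math.PI * v);
}

// One dense layer: a weight matrix with nOut rows and nIn columns.
function makeLayer(nIn, nOut) {
  const W = [];
  for (let i = 0; i < nOut; i++) {
    const row = [];
    for (let j = 0; j < nIn; j++) row.push(randn());
    W.push(row);
  }
  return { W };
}

// Build `depth` hidden layers of width `size`, plus one output layer.
function makeGenerator(nIn, size, depth, nOut) {
  const layers = [];
  let prev = nIn;
  for (let d = 0; d < depth; d++) {
    layers.push(makeLayer(prev, size));
    prev = size;
  }
  layers.push(makeLayer(prev, nOut));
  return layers;
}

// Matrix-vector product.
function matVec(W, x) {
  return W.map(row => row.reduce((s, w, j) => s + w * x[j], 0));
}

// Forward pass: tanh on every hidden layer, sigmoid on the output layer.
function forward(layers, input) {
  let h = input;
  for (let i = 0; i < layers.length - 1; i++) {
    h = matVec(layers[i].W, h).map(Math.tanh);
  }
  const out = matVec(layers[layers.length - 1].W, h);
  return out.map(v => 1 / (1 + Math.exp(-v)));
}

// Assumed inputs: (x, y, z1, z2); three outputs for one RGB pixel.
const net = makeGenerator(4, 8, 3, 3);
const pixel = forward(net, [0.25, -0.5, 0.1, -0.3]);
```

Calling `makeGenerator` again corresponds to randomising all of the weights, and changing `size` or `depth` changes the architecture.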

Usage

The best way to use this web app is to start with a low resolution, say around 160px, for faster processing. You can play around with different architecture configurations by hitting the restart button, which will randomise all of the weights of the neural network and display the updated picture with the new settings.

Different colour modes are now supported. I have implemented Black and White, Red Green Blue, CMYK, HSV, and HSL colour models, so the outputs of the network's sigmoid layer correspond to the channels of the chosen colour model. In addition, I have added the option of an extra alpha-transparency layer, which uses one more output to fill in the alpha channel.
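To make the idea concrete, here is a hedged sketch of how sigmoid outputs in [0, 1] could be turned into an RGBA pixel for a few of these colour models. The function names (`hsvToRgb`, `cmykToRgb`, `outputsToRgba`), the mode strings, and the convention that the alpha value is the last output are assumptions for illustration, not the web app's actual code. HSL is left out to keep the example short.

```javascript
// Sketch only: map network outputs in [0, 1] to an [r, g, b, a] pixel (0..255).

// Standard HSV -> RGB conversion, all inputs in [0, 1].
function hsvToRgb(h, s, v) {
  const i = Math.floor(h * 6);
  const f = h * 6 - i;
  const p = v * (1 - s);
  const q = v * (1 - f * s);
  const t = v * (1 - (1 - f) * s);
  let r, g, b;
  switch (i % 6) {
    case 0: r = v; g = t; b = p; break;
    case 1: r = q; g = v; b = p; break;
    case 2: r = p; g = v; b = t; break;
    case 3: r = p; g = q; b = v; break;
    case 4: r = t; g = p; b = v; break;
    default: r = v; g = p; b = q; break;
  }
  return [r, g, b].map(c => Math.round(c * 255));
}

// Simple CMYK -> RGB conversion, all inputs in [0, 1].
function cmykToRgb(c, m, y, k) {
  return [(1 - c) * (1 - k), (1 - m) * (1 - k), (1 - y) * (1 - k)]
    .map(ch => Math.round(ch * 255));
}

// Hypothetical dispatcher: `mode` is one of "bw", "rgb", "hsv", "cmyk".
// If useAlpha is true, the last output is assumed to be the alpha channel.
function outputsToRgba(outputs, mode, useAlpha) {
  const o = useAlpha ? outputs.slice(0, -1) : outputs;
  const alpha = useAlpha ? Math.round(outputs[outputs.length - 1] * 255) : 255;
  let rgb;
  if (mode === "bw") {
    const grey = Math.round(o[0] * 255);
    rgb = [grey, grey, grey];
  } else if (mode === "rgb") {
    rgb = o.slice(0, 3).map(c => Math.round(c * 255));
  } else if (mode === "hsv") {
    rgb = hsvToRgb(o[0], o[1], o[2]);
  } else if (mode === "cmyk") {
    rgb = cmykToRgb(o[0], o[1], o[2], o[3]);
  } else {
    throw new Error("unknown colour mode: " + mode);
  }
  return [rgb[0], rgb[1], rgb[2], alpha];
}
```

Under this scheme the network needs one output for Black and White, three for RGB and HSV, four for CMYK, and one more whenever the alpha option is switched on.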

After you are satisfied with the network architecture, you can explore the latent space of the network by adjusting the latent vector components Z1 and Z2 and hitting the redraw button to see how the image changes. Once you arrive at an image you like, if the resolution used during the trial-and-error phase is too small, you can increase the resolution and hit the redraw button to render the same image at a larger size. The save button will save the canvas to a .png file.
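One way to see why the same image can be redrawn at a larger size: if pixel positions are mapped to resolution-independent coordinates before being fed to the network, then the weights and the latent vector fully determine the picture, and the resolution only controls how finely it is sampled. The sketch below assumes coordinates normalised to [-1, 1]. `renderImage` is a hypothetical name, and `toyPixel` is a stand-in for the real generator.

```javascript
// Sketch only: render an RGBA buffer of size x size pixels from a pixel function.
// pixelFn(x, y, z1, z2) is assumed to return [r, g, b] with values in [0, 1].
function renderImage(pixelFn, z, size) {
  const data = new Uint8ClampedArray(size * size * 4);
  for (let j = 0; j < size; j++) {
    for (let i = 0; i < size; i++) {
      // Map the pixel centre to [-1, 1], independent of the resolution.
      const x = ((i + 0.5) / size) * 2 - 1;
      const y = ((j + 0.5) / size) * 2 - 1;
      const [r, g, b] = pixelFn(x, y, z[0], z[1]);
      const k = (j * size + i) * 4;
      data[k] = r * 255;
      data[k + 1] = g * 255;
      data[k + 2] = b * 255;
      data[k + 3] = 255; // fully opaque
    }
  }
  return data;
}

// Stand-in for the generator network (hypothetical, fixed "weights").
function toyPixel(x, y, z1, z2) {
  const sigmoid = v => 1 / (1 + Math.exp(-v));
  return [
    sigmoid(Math.tanh(3 * x + z1)),
    sigmoid(Math.tanh(3 * y + z2)),
    sigmoid(Math.tanh(x * y + z1 * z2)),
  ];
}

const z = [0.3, -0.7];
const small = renderImage(toyPixel, z, 1); // one pixel, centred at (0, 0)
const large = renderImage(toyPixel, z, 3); // centre pixel is also at (0, 0)
```

In a browser, a buffer like this could be wrapped in an `ImageData` object and drawn with the canvas context's `putImageData`, and the canvas could then be exported with `toDataURL("image/png")`.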

Try the web app demo here.

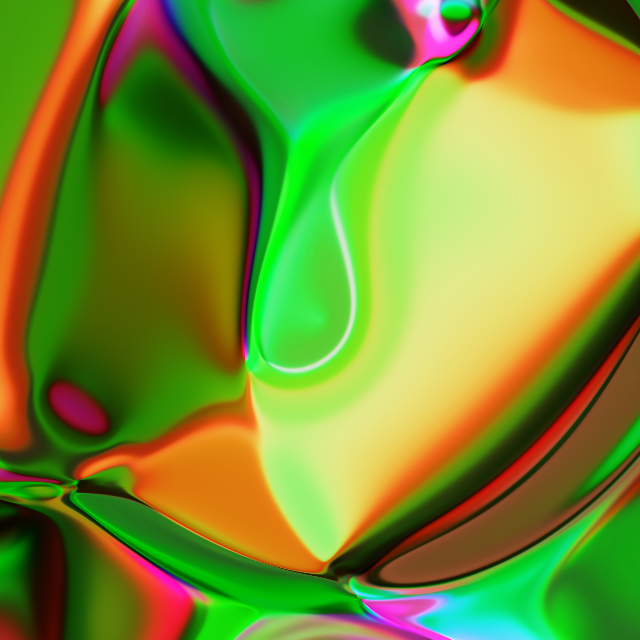

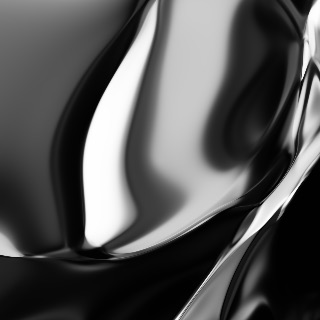

Various Examples


Future Work

The implementation is currently not optimised: I query the network once for each pixel. It would be much faster to batch-process the entire image, or even each line, rather than one pixel at a time. I have done such optimisations with Neurogram, but that was a much more intense project rather than just an hour's hack. If we try to use WebGL to optimise matrix operations, we may be able to perform near-realtime rendering in the future.
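A rough sketch of the per-line batching idea, under my own assumptions: the inputs for one scanline are packed into a single row-major matrix, and a dense tanh layer is applied to the whole batch as one matrix product. The names `rowInputs` and `denseTanhBatch` and the flat typed-array layout are hypothetical. In plain JavaScript this mostly restructures the work. The real speed-up would be expected once a product like this is handed to an optimised routine, for example one running on WebGL.

```javascript
// Sketch only: process a whole scanline as one batch instead of pixel by pixel.

// Build the (size x 4) input matrix for row j: columns are x, y, z1, z2.
function rowInputs(j, size, z) {
  const X = new Float64Array(size * 4);
  const y = ((j + 0.5) / size) * 2 - 1;
  for (let i = 0; i < size; i++) {
    X[i * 4] = ((i + 0.5) / size) * 2 - 1;
    X[i * 4 + 1] = y;
    X[i * 4 + 2] = z[0];
    X[i * 4 + 3] = z[1];
  }
  return X;
}

// Dense tanh layer on a batch.
// X is (n x nIn) row-major, W is (nOut x nIn) row-major, result is (n x nOut).
function denseTanhBatch(X, n, nIn, W, nOut) {
  const Y = new Float64Array(n * nOut);
  for (let r = 0; r < n; r++) {
    for (let o = 0; o < nOut; o++) {
      let s = 0;
      for (let k = 0; k < nIn; k++) s += X[r * nIn + k] * W[o * nIn + k];
      Y[r * nOut + o] = Math.tanh(s);
    }
  }
  return Y;
}

// Example: one layer with 4 inputs and 2 outputs, applied to a 4-pixel row.
const W = Float64Array.from({ length: 8 }, (_, k) => Math.sin(k + 1));
const X = rowInputs(0, 4, [0.3, -0.7]);
const rowOut = denseTanhBatch(X, 4, 4, W, 2);
```

Stacking several such calls, with a sigmoid in place of tanh on the last one, would give the activations for an entire line in one pass.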

It should also be possible to export certain simple types of TensorFlow trained networks into JSON and get recurrent.js to run those networks, given the somewhat similar computational graph nature of both libraries.